What is robots.txt

The robots.txt file is a plain text file that tells web robots (most often search engine crawlers) which pages on your site they may crawl and which pages they should skip.

Finding your robots.txt file

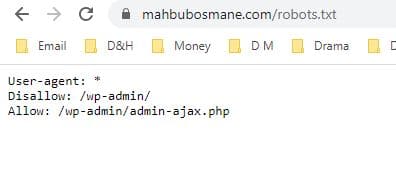

If you just want a quick look at your robots.txt file, there’s a super-easy way to view it. All you have to do is type the base URL of the site into your browser’s address bar (e.g., mahbubosmane.com or bpoengine.com), then add /robots.txt to the end.
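The same step can be sketched in Python. This is only an illustration: the helper name robots_txt_url is made up for this example, and it assumes https when you pass a bare domain.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_txt_url(site):
    """Return the robots.txt address for a bare domain or a full URL."""
    # Assumption: a bare domain such as "mahbubosmane.com" is served over https.
    if "://" not in site:
        site = "https://" + site
    parts = urlsplit(site)
    # robots.txt always sits at the top level of the host, so any page path
    # in the input is replaced rather than extended.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


print(robots_txt_url("mahbubosmane.com"))
print(robots_txt_url("https://bpoengine.com/some/page/"))
```

Whatever page you start from, the result points at the site root, which is the only place crawlers look for the file.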

Finding your robots.txt file on your server

To edit the file, you need to locate it in your site’s root directory. Usually, you can find your root directory by going to your hosting account website, logging in, and heading to the file management or FTP section of your site.

Note: If you’re using WordPress, you might see a robots.txt file when you go to yoursite.com/robots.txt, but you won’t be able to find it in your files.

This is because WordPress creates a virtual robots.txt file if there’s no robots.txt in the root directory. If this happens to you, you’ll need to create a new robots.txt file.

Creating a robots.txt file

Please visit this link to create a new robots.txt file. You can also create one yourself using the plain text editor of your choice. (Remember, only use a plain text editor; a word processor can add formatting that crawlers cannot read.)

If you already have a robots.txt file, make sure you’ve deleted the text (but not the file).
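If you would rather script this step, here is a minimal sketch in Python. The helper name write_robots_txt is hypothetical, and a temporary folder stands in for your real root directory. The starter content, User-agent: * with an empty Disallow: line, allows every bot to crawl everything, which makes it a safe blank slate.

```python
import tempfile
from pathlib import Path


def write_robots_txt(root_dir, lines):
    """Create robots.txt in root_dir, or replace the text of an existing one."""
    path = Path(root_dir) / "robots.txt"
    # write_text keeps (or creates) the file itself but discards any text
    # it already held, which matches "delete the text, not the file".
    path.write_text("\n".join(lines) + "\n", encoding="utf-8")
    return path


# Demo in a throwaway directory standing in for the site's root directory.
with tempfile.TemporaryDirectory() as root_dir:
    path = write_robots_txt(root_dir, ["User-agent: *", "Disallow:"])
    print(path.read_text(encoding="utf-8"))
```

On a real site you would pass the path of your root directory instead of the temporary one, or simply upload the resulting file through your host's file manager.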

First, you’ll need to become familiar with some of the syntax used in a robots.txt file.

Google has a nice explanation of some basic robots.txt terms.

Optimizing robots.txt for SEO

One of the best uses of the robots.txt file is to maximize search engines’ crawl budgets by telling them to not crawl the parts of your site that aren’t displayed to the public.

For example, if you visit the robots.txt file for neilpatel.com, you’ll see that it disallows the login page (wp-admin).

Since that page is just used for logging into the backend of the site, it wouldn’t make sense for search engine bots to waste their time crawling it.

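If you want to tell a bot not to crawl your page http://yoursite.com/page/, add a Disallow rule for its path under the User-agent line it applies to: User-agent: * on one line (the asterisk means all bots), then Disallow: /page/ on the next.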
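One way to confirm that a rule like this does what you expect is to run it through Python's standard urllib.robotparser module. This is a minimal sketch, assuming the rule should apply to all bots (User-agent: *). The string ROBOTS_TXT holds the two lines you would put in the file, and the /about/ page is a made-up example of a page the rule does not mention.

```python
from urllib.robotparser import RobotFileParser

# The rule itself: these two lines are what goes into robots.txt.
ROBOTS_TXT = """\
User-agent: *
Disallow: /page/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# can_fetch(user_agent, url) answers: may this bot crawl this URL?
print(parser.can_fetch("*", "http://yoursite.com/page/"))   # False: blocked by the rule
print(parser.can_fetch("*", "http://yoursite.com/about/"))  # True: not mentioned, so crawlable
```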

Thank you pages. The thank you page is one of the marketer’s favorite pages because it means a new lead.

…Right?

As it turns out, some thank you pages are accessible through Google. That means people can access these pages without going through the lead capture process, and that’s bad news.

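By blocking your thank you pages, you make it far less likely that people find them through search without going through the lead capture process. Keep in mind that robots.txt only asks crawlers to stay away; it does not stop anyone who already has the link.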

So let’s say your thank you page is found at https://yoursite.com/thank-you/. In your robots.txt file, blocking that page means adding the line Disallow: /thank-you/ under your User-agent line.
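A Disallow rule matches by path prefix, so it also covers anything underneath the thank you page. Here is a small sketch using Python's standard urllib.robotparser. The helper is_blocked, the /thank-you/ebook/ page, and the /thanks/ page are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# What the robots.txt file would contain for this example.
ROBOTS_TXT = """\
User-agent: *
Disallow: /thank-you/
"""


def is_blocked(url, agent="Googlebot"):
    """Return True if the rules above tell the given bot not to crawl url."""
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT.splitlines())
    return not parser.can_fetch(agent, url)


for url in (
    "https://yoursite.com/thank-you/",        # the page itself
    "https://yoursite.com/thank-you/ebook/",  # a page underneath it
    "https://yoursite.com/thanks/",           # a different path
):
    print(url, "blocked" if is_blocked(url) else "crawlable")
```

The first two URLs come back blocked and the third stays crawlable, because /thanks/ does not start with /thank-you/.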

Since there are no universal rules for which pages to disallow, your robots.txt file will be unique to your site. Use your judgment here.

Test your robots.txt with the robots.txt Tester

Please visit this link to test your robots.txt
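You can also run a quick check on your own machine before uploading the file. The sketch below is not Google's tester. It uses Python's standard urllib.robotparser, and the function name failed_expectations, the sample rules, and the sample URLs are all assumptions made for illustration. You list each URL together with whether it should be crawlable, and the function returns the ones that behave differently.

```python
from urllib.robotparser import RobotFileParser


def failed_expectations(robots_txt, expectations, agent="*"):
    """Return the URLs whose crawl permission differs from what was expected."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [
        url
        for url, should_allow in expectations.items()
        if parser.can_fetch(agent, url) != should_allow
    ]


robots_txt = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /thank-you/
"""

# True means "bots should be able to crawl this", False means "should be blocked".
expectations = {
    "https://yoursite.com/": True,
    "https://yoursite.com/blog/": True,
    "https://yoursite.com/wp-admin/": False,
    "https://yoursite.com/thank-you/": False,
}

print(failed_expectations(robots_txt, expectations))  # [] when every rule behaves as intended
```

Python's parser uses plain prefix matching, so results for rules that contain wildcards may differ from what a search engine's own tester reports. Treat this as a first pass rather than the final word.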
